Diverse Enrollment Isn’t Subgroup Evidence in Clinical Trials


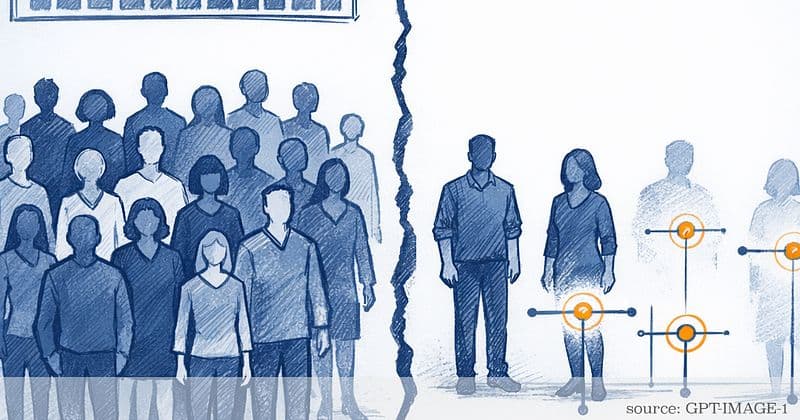

Clinical trials increasingly highlight “diverse enrollment” as proof that results apply to everyone. But a baseline table with more categories is not the same as evidence you can use when the question is specific: What are the benefits and harms for women of color, during pregnancy and postpartum, with PCOS, with endometriosis, or in perimenopause? The evidence often isn’t missing because no one was enrolled. It’s missing because the trial can’t reliably estimate subgroup effects, or because the paper doesn’t report the details needed to judge them.

This article separates two meanings of diversity that are often blurred. First is descriptive diversity: who showed up in the study (race/ethnicity, age, sex) and how transparently that’s reported (CONSORT 2010). Second is diversity as evidence, also called analytic diversity: whether the study can support decision-grade subgroup estimates—effect sizes with uncertainty, grounded in enough events—rather than leaving readers to guess from an “average effect” (Wang et al., 2007; Sun et al., 2012). Guidance from the National Academies and longstanding methodological critiques make the core point plain: inclusion targets do not automatically produce valid subgroup inference (Rothwell, 2005; National Academies, 2022).

You’ll also see why subgroup evidence so often breaks. Subgroup claims usually need more information than headline results (Brookes et al., 2004). Follow-up and missing outcomes can selectively erase the very participants a trial aimed to include (Rubin, 1976; Hernán et al., 2004). And “average effects” can hide meaningful differences in absolute benefit or harm when baseline risk isn’t evenly distributed across populations (ICH E9/E9(R1)). Along the way, this article validates a reality many women recognize: different baseline risks and lived contexts can change outcomes. Yet the honest scientific answer is sometimes “we don’t know yet,” not “there’s no difference.”

The goal is practical: a fast, reader-friendly framework for judging whether a study truly speaks to people like you, plus a clear evidence hierarchy (gold standard, promising, theoretical) so claims match the level of uncertainty. When I’m screening a trial for my “papers to practice” database, I treat subgroup reporting as non-negotiable: if the usable subgroup details aren’t there, I assume uncertainty, not equality. By the end, you’ll know what to look for in a trial report, and what to ask for when it’s missing, so “diversity” isn’t just a headline but usable evidence.

Enrollment Isn’t Evidence: Two Meanings of “Diversity” in Clinical Trials

Trials often claim they’re “diverse,” but that word can mean two different things.

Descriptive diversity is a demographic snapshot: how many participants of each race/ethnicity, age band, or sex appear in the baseline table. It’s necessary for transparency, but it is not the same as diversity as evidence: data that can support reliable benefit and harm estimates for women of color. National Academies guidance is clear that inclusion targets don’t automatically produce valid subgroup inference (National Academies, 2022). Rothwell also notes that “more representative” is not the same as “actionable for every subgroup” (Rothwell, 2005). CONSORT improves reporting of flow, baseline characteristics, and analyses (CONSORT 2010), but it can’t make a trial informative for subgroups.

A paper may report that 20% of participants were Black women but leave out what matters for decisions: (1) subgroup effect estimates, (2) event counts by arm within subgroup, and (3) uncertainty (confidence intervals). Without these, “diverse enrollment” can become a marketing claim rather than decision-grade evidence. In practice, that often looks like this: the baseline table is diverse, but the results section never shows event counts or confidence intervals by subgroup. Readers are asked to assume the average effect applies equally. Partial subgroup reporting can also mislead when “not statistically significant in subgroup” is treated as “no difference,” a known interpretive error (Wang et al., 2007).

Analytic diversity: can the trial answer subgroup questions?

Analytic diversity is the study’s ability to estimate treatment effects within subgroups with uncertainty narrow enough to matter. ICH E9 emphasizes that confirmatory claims require prespecification and a clear definition of what’s being estimated. Unplanned subgroup analyses are usually exploratory (ICH E9; ICH E9(R1)). Sun et al. outline credibility criteria, including prespecification, rationale, and consistency, that help separate believable subgroup findings from noise (Sun et al., 2012). One caution to carry forward: small subgroups produce noisy estimates, so a striking effect in an underpowered subgroup analysis can look more convincing than it is.

Subgroup questions are about differences between groups, not two separate “did it work here?” verdicts. The common trap is treating “significant in one group, not significant in another” as proof of a difference. More credible subgroup claims show (a) the subgroup-specific estimates with uncertainty and (b) a direct check of whether effects differ across groups (Sun et al., 2012; Wang et al., 2007). When many subgroups and outcomes are tested, false positives become easier to generate unless authors are transparent about what was planned versus explored—another reason prespecification matters.
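To make the “significant here, not there” trap concrete, here is a minimal Python sketch. It assumes a binary outcome, a risk-ratio effect measure, and a simple normal-approximation test on the difference of log risk ratios. The counts are hypothetical and the function names are illustrative, not taken from any cited paper.

```python
import math
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # two-sided 95% critical value, about 1.96

def log_risk_ratio(events_t, n_t, events_c, n_c):
    """Return (log risk ratio, standard error) for treatment vs control."""
    log_rr = math.log((events_t / n_t) / (events_c / n_c))
    se = math.sqrt(1 / events_t - 1 / n_t + 1 / events_c - 1 / n_c)
    return log_rr, se

def rr_with_ci(counts):
    """Risk ratio with an approximate 95% confidence interval."""
    log_rr, se = log_risk_ratio(*counts)
    return tuple(math.exp(x) for x in (log_rr, log_rr - Z95 * se, log_rr + Z95 * se))

def interaction_p_value(counts_a, counts_b):
    """Two-sided p-value for 'do the two subgroup risk ratios differ?'."""
    log_a, se_a = log_risk_ratio(*counts_a)
    log_b, se_b = log_risk_ratio(*counts_b)
    z = (log_a - log_b) / math.sqrt(se_a**2 + se_b**2)
    return 2 * (1 - NormalDist().cdf(abs(z)))

# Hypothetical counts: (events_treated, n_treated, events_control, n_control)
larger_subgroup = (60, 1000, 90, 1000)
smaller_subgroup = (9, 150, 12, 150)

for name, counts in [("larger", larger_subgroup), ("smaller", smaller_subgroup)]:
    rr, lo, hi = rr_with_ci(counts)
    print(f"{name} subgroup: RR {rr:.2f} (95% CI {lo:.2f} to {hi:.2f})")

p_int = interaction_p_value(larger_subgroup, smaller_subgroup)
print(f"interaction p-value: {p_int:.2f}")
```

With these made-up counts, the larger subgroup’s interval excludes 1 and the smaller subgroup’s interval includes it, yet the interaction p-value is far above 0.05. The data give no real evidence that the effect differs; the smaller subgroup is simply too imprecise to say.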

A practical evidence hierarchy helps keep claims aligned with uncertainty:

- Gold standard: Prespecified subgroup analysis with adequate precision and/or replication across studies. Confirmatory intent is defensible (ICH E9/E9(R1)).

- Promising: Prespecified but imprecise results, or exploratory signals that repeat across datasets. This supports follow-up studies rather than confident clinical claims.

- Theoretical: Mechanistic plausibility (for example, baseline risk differences affecting absolute benefit). Useful for hypotheses, not proof.

Why subgroup evidence breaks: power, missingness, and the “average effect” trap

Power: “no heterogeneity” often means “not enough information”

The phrase “no heterogeneity by race/ethnicity” often deserves scrutiny. Detecting subgroup differences generally requires far more information than detecting the main effect. A common rule of thumb: detecting an interaction as large as the overall treatment effect, with the same power, takes about 4× the sample size, even when the two subgroups are equal in size (Brookes et al., 2004). When one subgroup is smaller or events are rare, uncertainty widens further. CONSORT expects effect estimates with precision (Item 17a), but if subgroup confidence intervals aren’t shown, readers can’t tell whether “no heterogeneity” reflects true similarity or wide uncertainty (CONSORT 2010).
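The arithmetic behind the 4× rule of thumb can be sketched in a few lines of Python. This is a simplified illustration, not a power calculation for any real trial: it assumes two equal-sized subgroups, equal allocation, normally distributed estimates, and an interaction exactly as large as the overall effect. The helper name `power_two_sided` is hypothetical.

```python
from statistics import NormalDist

norm = NormalDist()

def power_two_sided(effect, se, alpha=0.05):
    """Approximate power of a two-sided z-test (ignores the negligible far tail)."""
    z_crit = norm.inv_cdf(1 - alpha / 2)
    return norm.cdf(abs(effect) / se - z_crit)

alpha, target_power = 0.05, 0.80
effect = 1.0  # arbitrary units; only the ratio effect / SE matters

# Standard error at which the overall effect has exactly 80% power.
se_overall = effect / (norm.inv_cdf(1 - alpha / 2) + norm.inv_cdf(target_power))

# Each half-sized subgroup estimate has twice the variance of the overall
# estimate, and the difference of two such estimates has four times the
# variance, so the interaction's standard error is twice as large.
se_interaction = 2 * se_overall

power_main = power_two_sided(effect, se_overall)
power_interaction = power_two_sided(effect, se_interaction)

# Standard error shrinks with the square root of sample size, so matching
# the overall precision needs (2)^2 = 4 times as many participants.
sample_size_inflation = (se_interaction / se_overall) ** 2

print(f"power for overall effect: {power_main:.2f}")
print(f"power for same-sized interaction: {power_interaction:.2f}")
print(f"sample size inflation needed: {sample_size_inflation:.0f}x")
```

Under these assumptions, a trial sized for 80% power on the overall effect has power just under 30% to detect an interaction of the same size, which is why a non-significant interaction test in a typical trial says little about whether effects truly match.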

Baseline risk differences make this especially relevant in women’s health. Even if relative effects are similar, absolute benefit or harm depends on baseline risk, which is not evenly distributed across populations (CDC NVSS; HCUP; NHANES). So when baseline risk plausibly differs, don’t stop at relative effects: look for absolute risks (or absolute risk differences) by subgroup, or enough subgroup outcome counts to compute them. If the paper won’t show that, it’s telling you something about how portable its headline result really is.

Missingness: dropout can turn “randomized” into “whoever could stay”

Missing outcome data can quietly remove the very participants a trial aimed to include. If follow-up visits are harder to attend because of shift work, childcare, transport, or feeling dismissed in care, the results can end up reflecting only the women who could keep showing up—often not the women at highest baseline risk (Hernán et al., 2004; Hernán & Robins, 2020). In plain terms: when one group is more likely to disappear from the dataset, the trial can start answering a different question than the one you thought you were reading.

Rubin’s framework highlights why MNAR (missing not at random) is high-risk: assumptions become largely untestable from observed data (Rubin, 1976; Little & Rubin, 2002). In plain English: the data you do have can’t tell you whether the people who disappeared would have done better or worse.

Common shortcuts like complete-case analysis or last observation carried forward can both bias effects and misstate uncertainty (Sterne et al., 2009; Rubin, 1987). Guidance recommends making missing-data assumptions explicit and using sensitivity analyses when MNAR is plausible (National Research Council, 2010).
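One simple way to see how much missing outcomes could matter is to bound the result under extreme assumptions. The Python sketch below does this for a binary outcome. It is a deliberately crude illustration with hypothetical counts and names, not the sensitivity analysis recommended by any of the cited guidance; real analyses typically use more realistic approaches such as multiple imputation with varied assumptions.

```python
from dataclasses import dataclass

@dataclass
class Arm:
    randomized: int  # participants randomized to this arm
    observed: int    # participants with an observed outcome
    events: int      # events among those observed

    @property
    def missing(self) -> int:
        return self.randomized - self.observed

def risk_difference_scenarios(treated: Arm, control: Arm) -> dict:
    """Risk difference (treated minus control) under three missing-data assumptions."""
    complete_case = treated.events / treated.observed - control.events / control.observed
    # Most favorable to treatment: no missing treated participant had the
    # event, every missing control participant did.
    best_case = (treated.events / treated.randomized
                 - (control.events + control.missing) / control.randomized)
    # Least favorable to treatment: the reverse.
    worst_case = ((treated.events + treated.missing) / treated.randomized
                  - control.events / control.randomized)
    return {"complete_case": complete_case, "best_case": best_case, "worst_case": worst_case}

# Hypothetical subgroup with more dropout in the treated arm.
treated = Arm(randomized=200, observed=150, events=15)
control = Arm(randomized=200, observed=180, events=27)

scenarios = risk_difference_scenarios(treated, control)
for label, value in scenarios.items():
    print(f"{label}: {value * 100:+.1f} percentage points")
```

In this made-up example the complete-case estimate favors treatment, but the bounds run from a large benefit to a large harm. When the range is that wide, the observed data alone cannot settle the question, and the paper’s stated missing-data assumptions carry the conclusion.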

The “average effect” trap: one headline result becomes a universal recommendation

Trials and guidelines often highlight a single primary effect, while subgroup absolute effects go unreported even when baseline risk differs (ICH E9(R1); Rothwell, 2005). A simple translation: if baseline risk is 2% in one group and 10% in another, the same 20% relative risk reduction yields an absolute reduction of 0.4 percentage points in the first group versus 2 percentage points in the second. That is a different benefit-harm tradeoff. Missing subgroup tables usually mean missing information, not evidence of equality.
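The same arithmetic as a short Python sketch, using the 2% and 10% baseline risks and the 20% relative risk reduction from the example above. The number needed to treat (NNT) is an added illustration, not a figure from the source, and the function name is hypothetical.

```python
def absolute_effect(baseline_risk, relative_risk_reduction):
    """Return (absolute risk reduction, number needed to treat)."""
    treated_risk = baseline_risk * (1 - relative_risk_reduction)
    arr = baseline_risk - treated_risk
    return arr, 1 / arr

# Same relative effect applied to two different baseline risks.
results = {}
for label, baseline in [("2% baseline risk", 0.02), ("10% baseline risk", 0.10)]:
    arr, nnt = absolute_effect(baseline, relative_risk_reduction=0.20)
    results[label] = (arr, nnt)
    print(f"{label}: absolute reduction {arr * 100:.1f} percentage points, NNT about {nnt:.0f}")
```

The relative effect is identical, but roughly 250 people would need treatment to prevent one event in the lower-risk group versus roughly 50 in the higher-risk group. Any harms of treatment are weighed against very different benefits.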

Two things can be true at once: current evidence may be too imprecise to determine whether effects differ for women of color, and lived experience of differing responses and baseline risks is real. If you’ve ever been told your symptoms are “normal” while the evidence can’t even estimate your subgroup, that disconnect isn’t in your head—it’s in the data. The right scientific response is to treat that experience as hypothesis-generating, not to dismiss it.

When race/ethnicity data exists but still can’t carry evidence

Collapsed labels (“Asian,” “Hispanic”) pool very different populations. The label is not a biological mechanism. OMB categories were built for consistent reporting and civil rights monitoring, not biological inference (OMB Directive 15; revised 2024). Even if you’re in the UK, these US categories often shape multinational trials and journal reporting, so they show up in the papers you read. Journals increasingly expect authors to define how race/ethnicity were measured and why they were used (AMA Manual of Style; Flanagin et al., 2021).

Evidence also fails to accumulate when measurement isn’t harmonized: single-select versus multi-select, conflating race and ethnicity versus separate fields, and treating “unknown/declined/not collected” as generic missingness. Standards like CDISC controlled terminology (via NCI EVS), HL7 FHIR / US Core Race & Ethnicity, and NIH Common Data Elements aim to reduce this drift.

A related interpretive error is biologizing race. Race/ethnicity often functions as a proxy for lived context and structural exposures, and sometimes ancestry-related variation, not a causal biological variable (AAPA, 2019). Vyas et al. describe how race “corrections” in algorithms can shift care away from groups already at higher risk (Vyas et al., 2020). A practical alternative is to measure the mechanism: genotype or ancestry markers for biological hypotheses, or specific social exposures for social hypotheses.

A rapid reader’s framework: does this study speak to people like you?

Highest-yield move: ignore subgroup p-values and go straight to whether the paper gives you the subgroup minimum viable dataset—Ns, event counts, effect estimate + CI, and a direct check of whether effects differ across groups.

Quick screen:

- Context/definitions: How was race/ethnicity measured and defined (self-report, separate fields, multi-select)?

- Gold-standard reporting signals: Are subgroup Ns and event counts shown by arm?

- Gold-standard reporting signals: Were subgroup analyses prespecified (SPIRIT 2013 Item 20b)? Look in the trial registry or protocol for subgroup hypotheses listed under “Statistical analysis plan” or “Subgroup analyses,” dated before recruitment ends.

- Gold-standard reporting signals: Is there a direct test/check of whether effects differ by race/ethnicity (rather than “significant here, not there”)?

- Gold-standard reporting signals: Are subgroup effect estimates with confidence intervals reported?

- Credibility/robustness signals: Is multiplicity acknowledged (many subgroups/outcomes tested)?

- Credibility/robustness signals: Is attrition and missingness transparent, with assumptions and sensitivity analyses when needed?

- Interpretability signal: Are results truly reported by subgroup (not merely “adjusted for race”)?
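If you track papers in a spreadsheet or database, the core of this screen can be written down as a small record type. The Python sketch below is one possible layout, assuming a two-arm trial. Every field and function name is hypothetical, and it only checks whether the items were reported, not whether they are credible.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class SubgroupReport:
    """What a paper reports for one subgroup (all fields are illustrative)."""
    name: str
    n_by_arm: Optional[Tuple[int, int]] = None        # (treated, control)
    events_by_arm: Optional[Tuple[int, int]] = None   # (treated, control)
    effect_estimate: Optional[float] = None
    confidence_interval: Optional[Tuple[float, float]] = None
    interaction_reported: bool = False

def missing_items(report: SubgroupReport) -> List[str]:
    """List the parts of the subgroup minimum viable dataset that are absent."""
    checks = {
        "participants per arm": report.n_by_arm is not None,
        "event counts per arm": report.events_by_arm is not None,
        "effect estimate": report.effect_estimate is not None,
        "confidence interval": report.confidence_interval is not None,
        "check of whether effects differ": report.interaction_reported,
    }
    return [item for item, present in checks.items() if not present]

# A paper that shows a diverse baseline table and nothing else for this subgroup.
baseline_only = SubgroupReport(name="example subgroup", n_by_arm=(120, 118))
print(missing_items(baseline_only))
```

An empty list means the paper gives you enough to judge effect size and uncertainty for that subgroup. Anything left in the list is what to look for in the supplement or request from the authors.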

A quick example (postpartum): if you’re reading a trial that claims relevance to postpartum women, check whether postpartum participants are actually visible in the subgroup Ns and outcome counts—especially if follow-up runs through the newborn period. Then scan for unequal dropout: if one group has much higher loss to follow-up postpartum, the headline result may mostly reflect the people for whom appointments and follow-up were easiest to sustain.

What better research looks like

To turn “diverse enrollment” into decision-grade evidence, subgroup questions have to be treated as design requirements. That means prespecifying key subgroup hypotheses, planning for precision (not just total N), and reporting the subgroup minimum viable dataset (enough to judge both effect size and uncertainty, and whether effects differ across groups). Regulators encourage broader enrollment to improve generalizability, but diversity alone is not a shortcut to confirmatory subgroup claims (FDA, 2020).

The practical gap is counts versus conclusions. If there’s one reader move that pressures the system toward better science, it’s asking for the subgroup minimum viable dataset, not just a diverse baseline table.

“Diverse enrollment” is a starting point, not proof that a result applies to every woman across pregnancy and postpartum, PCOS, endometriosis, or perimenopause. The question to keep in your pocket is simple: does this paper show, or merely imply, what happens in the subgroup it claims to represent? In real papers, the answer is often hiding in the appendix or supplementary tables—while the main text sticks to one average headline. A quiet red flag is when race/ethnicity appears only as an “adjusted-for” covariate, with no subgroup results you can evaluate.

What’s one trial reporting detail you now plan to check, or ask for, before trusting a “diverse” headline?